IETF 125 Highlights

19 May 2026

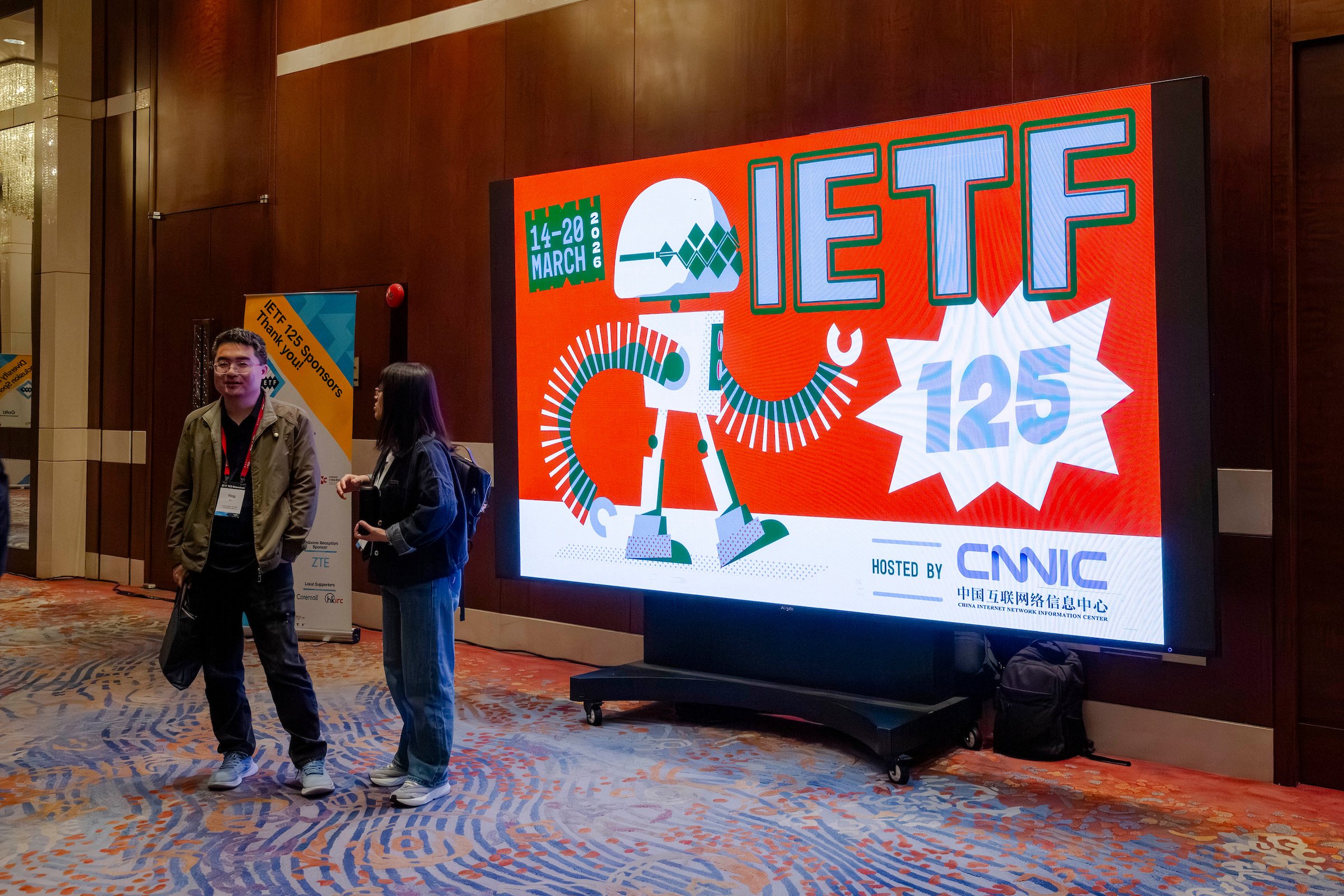

More than 1500 participants gathered in Shenzhen and online for the IETF 125 meeting, held 14-20 March 2026, which featured more than 100 working sessions, an IETF Hackathon, and more.

With so much happening during an IETF Meeting, it is not possible to follow everything that happens during the week. The IETF 125 proceedings provide materials, notes, and full recordings from every session. For more photos from the meeting, see the IETF 125 Shenzhen photo gallery.

The "Suggested IETF 125 Sessions for Getting Familiar with New Topics" post provided a brief introduction to sessions that might be of particular interest to those new to the IETF, or those looking to get involved in IETF work.

More than 270 people registered to participate in the IETF Hackathon in Shenzhen to collaborate on dozens of projects.

The Technology Deep Dive session on Routing Security featured a panel of experts who provided an approachable update on ongoing routing security work.

Thanks to the generous support of IETF Global Host Huawei, IETF 125 meeting participants in Shenzhen enjoyed an exceptional social event.

As usual, the plenary session included updates on various administrative and operational topics, and provided an opportunity for members to hear directly from the Internet Engineering Steering Group (IESG), the Internet Architecture Board (IAB), and the IETF Administration LLC Board of Directors.

Two Internet Research Task Force (IRTF) Open sessions during IETF 125 included Applied Networking Research Prize (ANRP) presentations and a discussion of research challenges for the IRTF at the intersection of AI systems and internetworking.